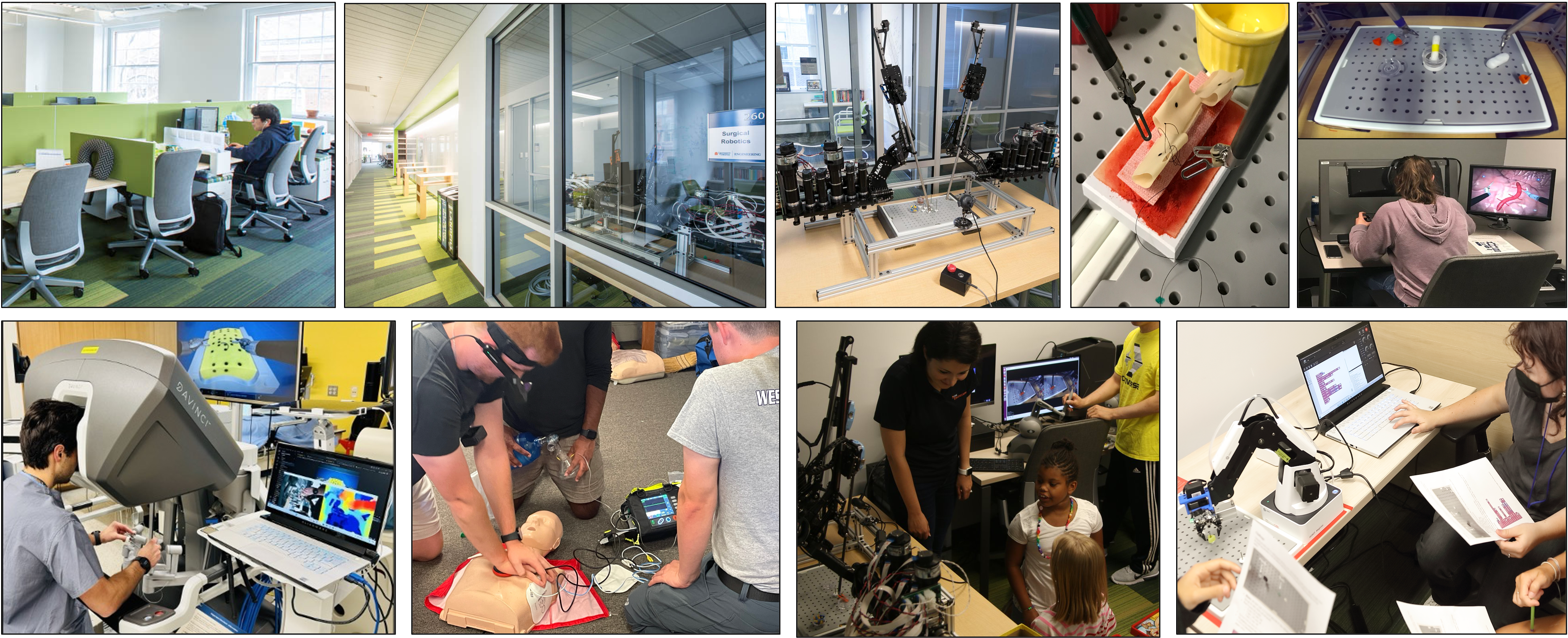

At the UVA Dependable Systems & Analytics group, we build the next generation of resilient cyber-physical systems (CPS). We take a multidisciplinary approach to the safety and security assurance of CPS, with applications to medical devices, surgical robots, and autonomous systems. By leveraging techniques from dependable computing and fault tolerance, machine learning, and real-time systems, we develop integrated model-driven and data-driven methods, realistic testbeds and simulators, and datasets to analyze incidents, assess resilience to faults and attacks, and enable runtime monitoring for the detection and mitigation of adverse events.

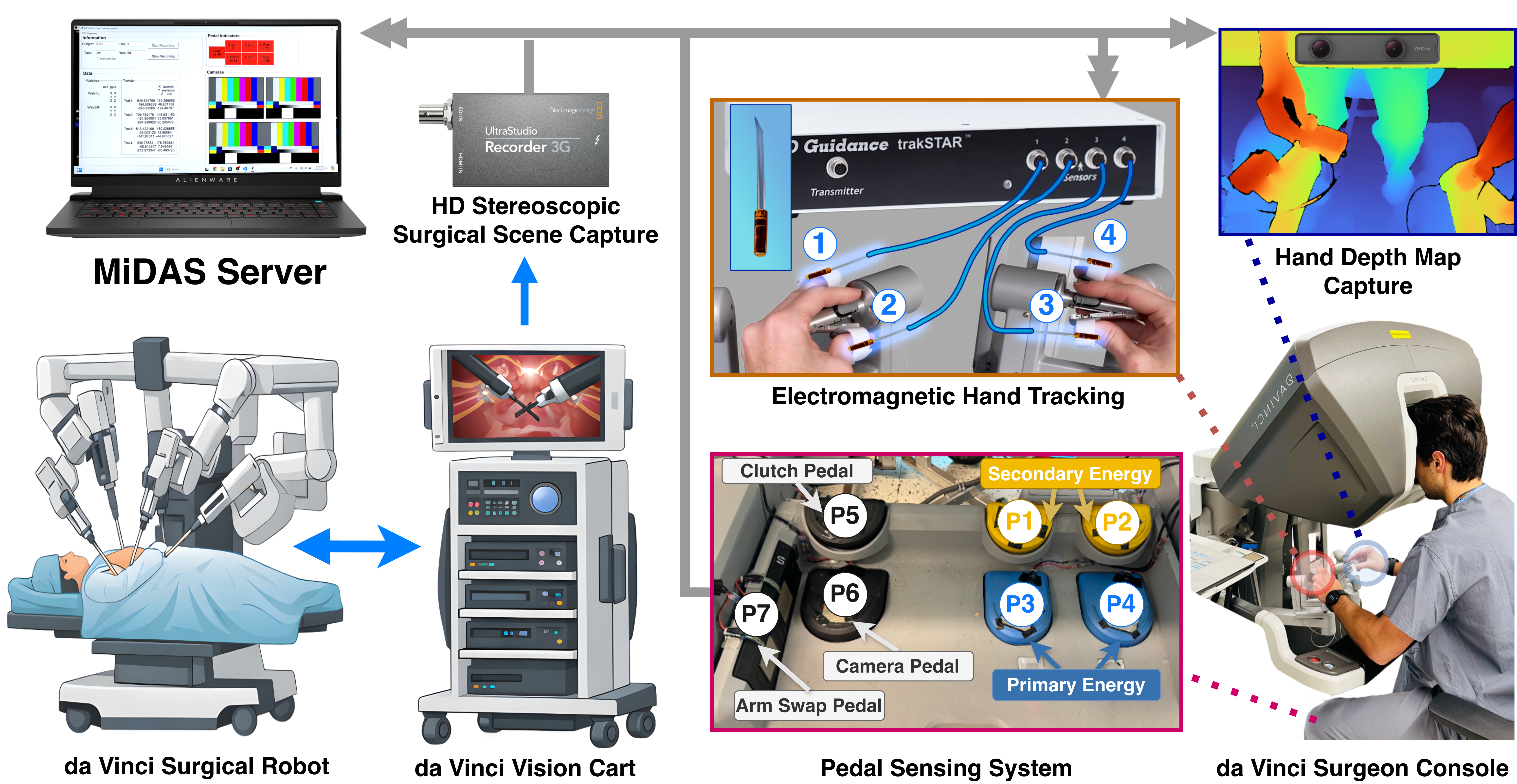

MiDAS: Multimodal Data Acquisition System for Robot-Assisted Surgery

This project introduces MiDAS, an open-source multimodal data acquisition system designed to support research in robot-assisted minimally invasive surgery (RMIS). The paper is currently under review at a medical robotics journal. The system enables synchronized collection of surgical data without requiring access to proprietary robot telemetry, making it possible to study surgical workflows and develop AI-driven assistance systems across multiple robotic platforms.

- Platform-agnostic multimodal sensing system with a non-invasive data collection framework that captures synchronized surgical data including electromagnetic hand tracking, RGB-D hand motion capture, stereo surgical video, and foot pedal interaction signals without modifying robotic hardware.

- Cross-platform data collection on surgical robots, validated on the open-source Raven-II surgical robot and the clinical da Vinci Xi system, showing that external sensing modalities can approximate internal robot kinematics and capture surgeon interaction dynamics.

- Multimodal surgical activity datasets with annotated data from robotic surgery training tasks, including peg transfer experiments and high-fidelity suturing procedures on realistic tissue simulation models during a surgical training bootcamp.

- Benchmarks for surgical gesture recognition that evaluate state-of-the-art temporal models on multimodal signals, demonstrating performance comparable to that obtained with proprietary robot telemetry.

By providing an open-source data acquisition system and multimodal datasets across multiple surgical platforms, MiDAS aims to reduce barriers to surgical AI research and accelerate the development of intelligent systems for surgical workflow understanding, skill assessment, and cognitive assistance in robot-assisted surgery.
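As a rough illustration of what synchronizing independently sampled sensor streams can involve, the sketch below matches each timestamp of a reference stream (for example, video frames) to the nearest sample of a second stream (for example, hand-tracker readings). This is a generic nearest-timestamp alignment, not necessarily the method MiDAS uses; the function name `align_nearest`, the `max_gap` tolerance, and the sample timestamps are all assumptions made for this example.

```python
from bisect import bisect_left

def align_nearest(ref_times, stream_times, max_gap):
    """For each reference timestamp, return the index of the nearest
    sample in stream_times, or None if the gap exceeds max_gap.
    Both lists must be sorted in ascending order (seconds).
    Hypothetical helper for illustration only."""
    matches = []
    for t in ref_times:
        i = bisect_left(stream_times, t)
        # Candidates: the sample just before t and the one at or after t.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(stream_times)]
        best = min(candidates, key=lambda j: abs(stream_times[j] - t), default=None)
        if best is not None and abs(stream_times[best] - t) <= max_gap:
            matches.append(best)
        else:
            matches.append(None)
    return matches

# Illustrative timestamps (not real data).
video_times = [0.0, 0.5, 1.0, 2.0]
tracker_times = [0.02, 0.48, 0.75, 1.01, 3.0]
aligned = align_nearest(video_times, tracker_times, max_gap=0.05)
```

Reference samples with no sufficiently close counterpart are left unmatched (`None`) rather than paired with a distant sample, so downstream models never see a misleading pairing.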

EMSDialog: Synthetic Multi-person EMS Dialogue Generation

EMSDialog generates synthetic multi-person emergency medical service dialogues from electronic patient care reports using multi-LLM agents. The paper has been accepted to ACL Findings 2026.

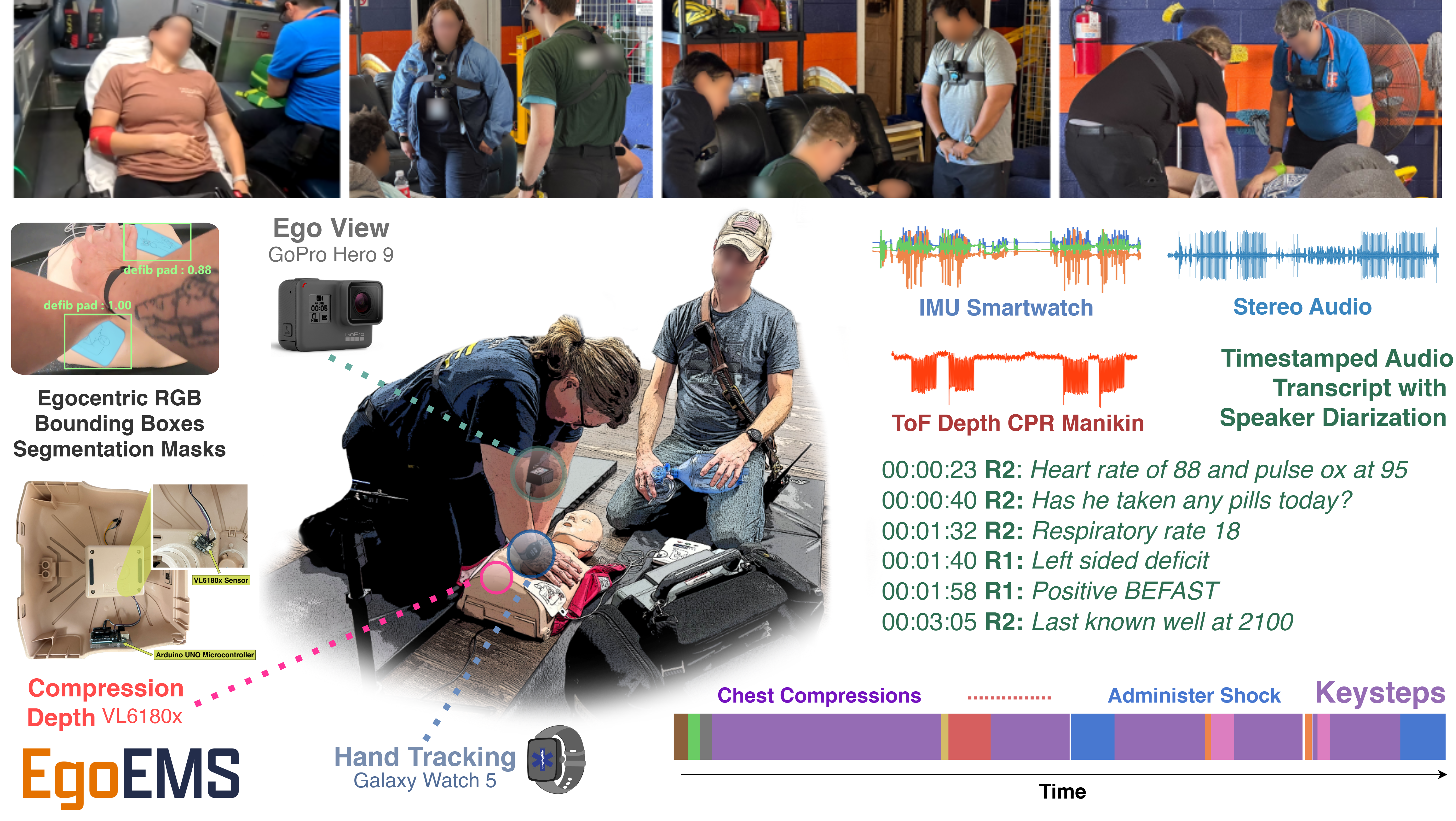

EgoEMS: Multimodal Egocentric Dataset for Cognitive Assistance in Emergency Medical Services

This project introduces EgoEMS, the first high-fidelity multimodal egocentric dataset designed to support the development of AI systems that assist Emergency Medical Services (EMS) responders during critical incidents. The dataset captures realistic emergency scenarios with synchronized multimodal sensing to enable research on real-time cognitive assistance, activity recognition, and performance feedback for first responders.

- Multimodal egocentric data collection via a synchronized sensing platform capturing responder egocentric video, conversational audio, smartwatch IMU motion signals, and CPR quality measurements using low-cost off-the-shelf devices.

- Realistic EMS scenario simulations with over 20 hours of data across 233 simulated emergency scenarios involving 62 participants performing interventions such as CPR, defibrillation, ventilation, stroke assessment, and ECG monitoring.

- Fine-grained procedural annotations including an EMS activity taxonomy with 67 keysteps across 9 medical interventions, timestamped audio transcripts, speaker diarization, object detection annotations, and segmentation masks for medical tools used during procedures.

- Benchmarks for AI cognitive assistants spanning keystep classification, keystep segmentation, and CPR quality estimation using multimodal data.

By providing realistic multimodal data and standardized benchmarks, EgoEMS aims to accelerate research on AI-powered cognitive assistants that improve situational awareness, protocol adherence, and responder training in emergency medical environments, ultimately contributing to better patient outcomes.

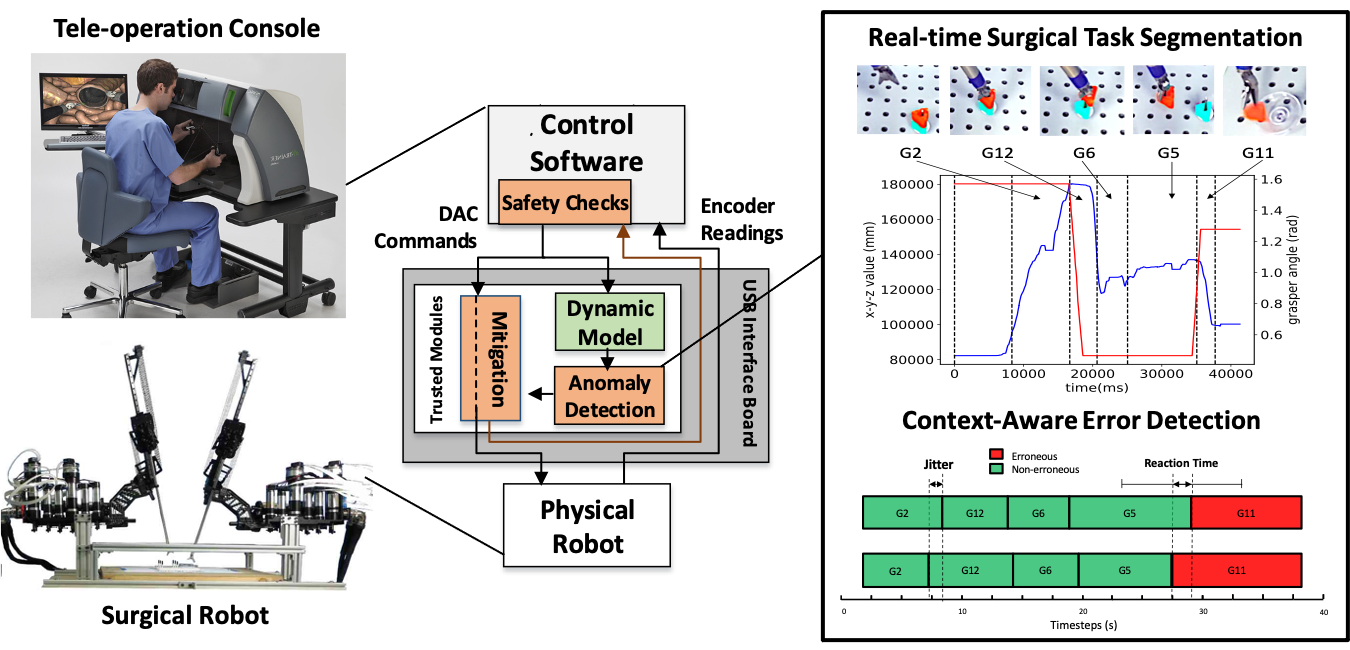

Resilient Cyber-Physical Systems for Robotic Surgery

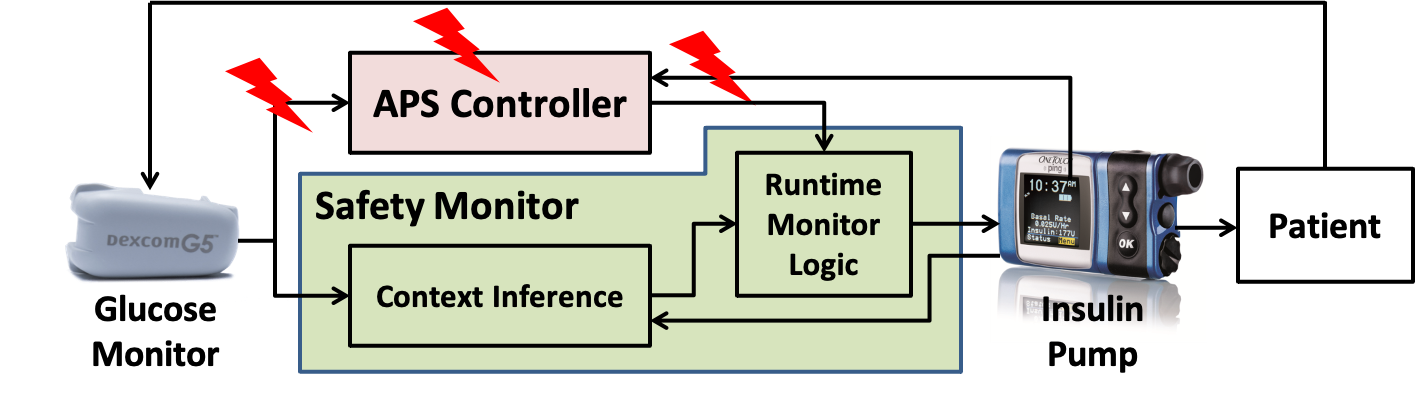

This project aims to enhance the safety and efficiency of robot-assisted surgical procedures by combining systematic modeling of safety incidents, resilience assessment under faults and cyber threats, continuous context-aware monitoring, and simulation-based training for surgical teams:

- Modeling and analysis of safety incidents by considering the interactions among cyber and physical system components and human operators;

- Resilience assessment of the robotic surgical systems in the presence of accidental system faults, cyber attacks, and human errors;

- Continuous context-aware monitoring for early detection of potential safety and security violations;

- Simulation-based safety training to prepare surgical teams to deal with unexpected events during surgery.
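To make the idea of context-aware monitoring concrete, here is a minimal sketch in which the safety limit applied to each sample depends on the current operational context. It is not the group's monitoring pipeline: the context labels, the `max_speed` limits, and the functions `check_sample` and `monitor` are hypothetical and chosen only for illustration.

```python
# Hypothetical per-context safety limits (illustrative values, not from the project).
LIMITS = {
    "reach": {"max_speed": 0.10},
    "grasp": {"max_speed": 0.03},
}

def check_sample(context, speed, limits=LIMITS):
    """Return True if the sample violates the limit for its context.
    Unknown contexts are flagged conservatively."""
    rule = limits.get(context)
    if rule is None:
        return True
    return speed > rule["max_speed"]

def monitor(stream, limits=LIMITS):
    """Yield (index, context, speed) for every violating sample."""
    for i, (context, speed) in enumerate(stream):
        if check_sample(context, speed, limits):
            yield i, context, speed

# Illustrative stream of (context, speed) pairs.
stream = [("reach", 0.05), ("grasp", 0.05), ("grasp", 0.02), ("cut", 0.01)]
violations = list(monitor(stream))
```

The same speed of 0.05 is acceptable while reaching but flagged while grasping, which is the point of conditioning the check on context rather than using one global threshold.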

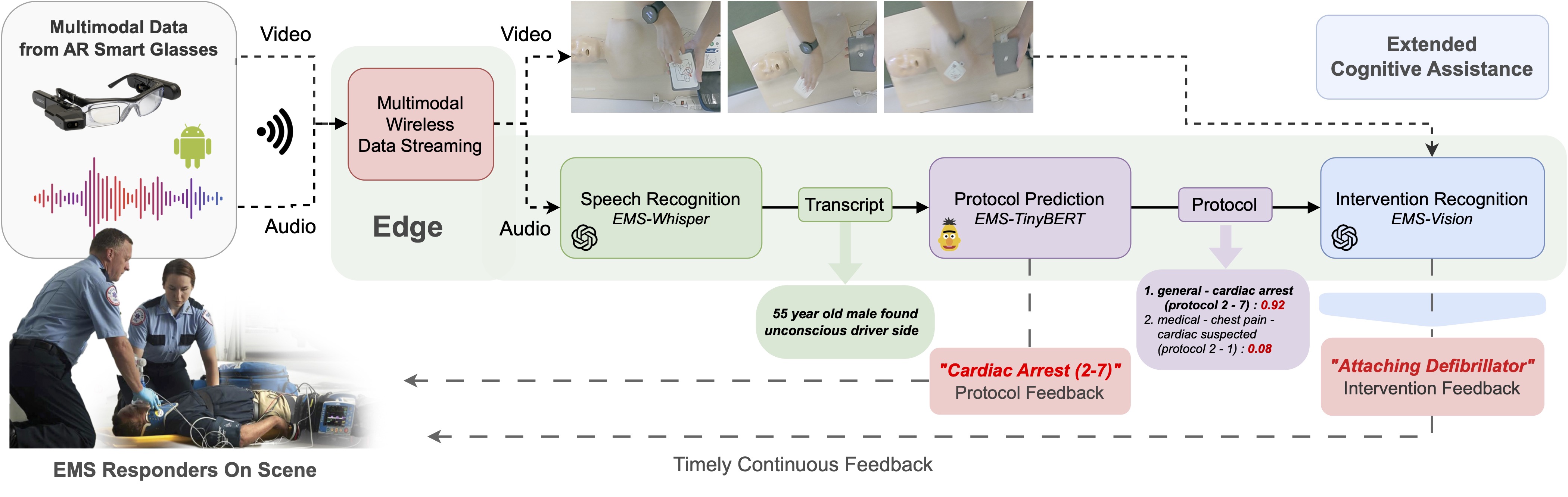

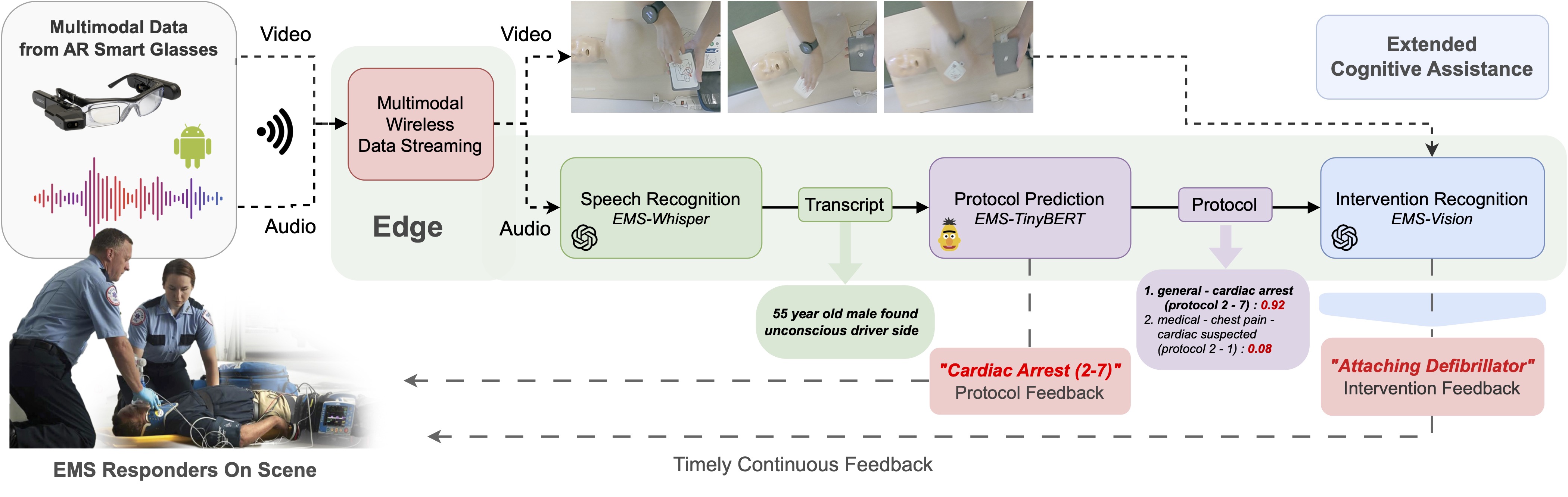

Cognitive Assistant Systems for Emergency Response

This project focuses on developing the next generation of first responder technologies that enhance situational awareness and safety in emergency response. The central goal is to design a wearable cognitive assistant system that integrates the following key components:

- Resilient data analytics for collecting heterogeneous data streams from the incident scene, aggregating them with knowledge bases and publicly available sources, and transforming the results into accurate, actionable feedback for first responders;

- Anytime real-time sensing and edge computing resources that are dynamically optimized to enable continuous data processing on responder-worn devices, even under unexpected events such as hardware failures or network disconnections.

Resilience-by-Construction Design of Medical Devices

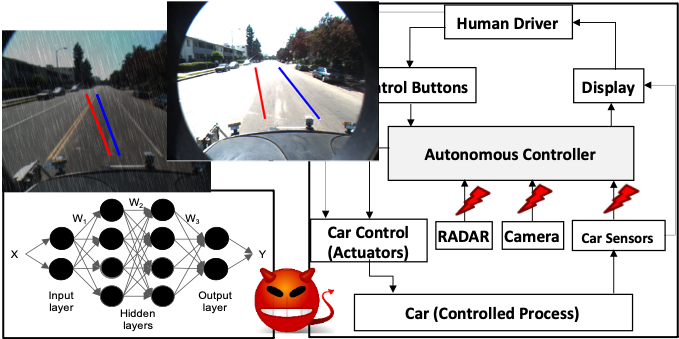

Dependable and Secure Artificial Intelligence for Autonomous Vehicles